Why Product Discovery Keeps Failing For Complex B2B SaaS

Product discovery treats three different activities as one. For complex B2B software, that confusion leads to expensive trial and error labelled as “best practice”.

Product discovery, the practice of figuring out what to build before you commit to significant investment, is one of the best ideas the software industry has produced.

The alternative is depressing and still far too common: someone in a meeting decides what sounds good, the team builds it for a few weeks or months, and then everyone watches customers shrug. Or worse, the team builds whatever the loudest customer asked for, and stitches requested features into a product that satisfies nobody.

The high failure rate of product development is, to a large extent, the result of building without adequate understanding of what customers actually need.

Thinkers like Marty Cagan, Steve Blank, Teresa Torres, Melissa Perri and Ash Maurya have done valuable work championing the idea that product teams should talk to customers, gather evidence, and validate their direction before burning through development budgets. Their core message is right: don’t guess. Go find out.

The goals of product discovery are hard to argue with.

Understand what customers need.

Confirm that your solution addresses those needs.

Make sure the technology can deliver.

Check that the business model works.

Do all of this before you bet the company on building something.

But there is a big problem with how product discovery is actually practised. A confusion is buried so deep in the concept itself that most people don’t realise it’s there.

Three things pretending to be one

When people talk about product discovery, they typically mean one integrated activity. But look more carefully at what product teams actually need to accomplish, and you will find three quite different activities conducted together as if they were one:

Discovering customer problems: understanding the real-world situations customers face, what they are trying to accomplish, and what makes outcomes good or bad

Inventing the solution: defining what the product should do from the customer’s perspective (its features, interactions, and behaviour)

Investigating technology: researching suitable technology, studying feasibility, and figuring out how to implement the defined solution

These three activities differ in almost every way:

They have different objects: facts that exist in the customer’s world, versus a product concept that doesn’t exist yet, versus existing technologies and their capabilities.

They require different skills: investigative and analytical, versus inventive and design-oriented, versus engineering.

They draw from different sources: customers, versus the product team’s own reasoning, versus technical research and experimentation.

And they follow a logical sequence:

You need to understand the problem before you can invent a good solution.

You need to know what the solution should do before you can figure out if and how the technology can deliver it.

“A solution can only be as good as your understanding of the problem you’re addressing.”

–Paul Adams, Chief Product Officer, Intercom1

The table below summarises and contrasts these discovery activities.

What “problem” actually means

The conflation of these three activities runs deeper than methods. It begins with what mainstream advice means by the word “problem”.

Marty Cagan describes the problems product teams should solve as “unmet needs” in customers “frustrated with current offerings”: a better search engine, a better music service, a better navigation system.2

Teresa Torres defines opportunities as “customer needs, pain points or desires”.3

Ash Maurya’s problem interview script asks customers to rank their top three problems, with the goal of finding “evidence of monetizable pain”.4 Maurya himself has since walked back the ranking step, observing that “rankings are subjective” and that customers “don’t often understand their own problems well enough to rank them in the first place”.5

In every case, a “problem” is either a frustration with an existing solution or a desire for a better one. Both definitions depend on solutions.

This is important because three very different things get bundled under the word “problem”.

Pain points are problems with a current solution, which itself addresses some underlying problem. “We can never find the right information.” “This process is complicated.” A pain point is a symptom of poor fit between an existing tool (a solution) and the customer’s situation (the real underlying problem). It is not the underlying problem itself.

Feature requests are customers’ guesses at a better solution. “We need an AI version of X.” “We need automated data discrepancy management.” Desired features tell you a customer has an underlying problem worth solving, but a customer’s feature ideas are constrained by what they have experienced before and what they can imagine. The requested feature is rarely the best way to address the problem.

Underlying customer problems are different from pain points and desired features. They are concrete situations in which a particular customer is trying to accomplish something they value. A situation has structure: who is involved, what they are trying to do and why, what initiates the situation, what circumstances and dependencies apply, what constraints can’t be changed, and how the customer judges whether the result is good. Situations exist in the customer’s world regardless of what software exists, has existed, or might exist.

An insurance company must produce financial statements that comply with specific regulatory requirements, whether their accounting software supports insurance-specific needs or not. An emergency department must allocate resources during a patient surge whilst maintaining care quality for everyone else, regardless of what software is available. A retailer must determine the right assortment of products for each store given regional demand patterns, supplier constraints, and shelf space limits, with or without a planning tool.
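As a minimal sketch, the structure described above can be written down as a record, which makes it easy to see which elements of a situation have been discovered and which are still missing. The class name, field names, and the `missing_elements` helper are hypothetical, chosen for illustration only. The example fills in just what the retailer sentence above states and leaves everything else empty.

```python
from dataclasses import dataclass, field, fields


@dataclass
class CustomerSituation:
    """Hypothetical record of one underlying customer problem.

    Field names are illustrative; they mirror the structural elements
    listed in the text, not any established schema.
    """
    actors: list[str] = field(default_factory=list)            # who is involved
    goal: str = ""                                              # what they are trying to do
    reason: str = ""                                            # why they value the outcome
    trigger: str = ""                                           # what initiates the situation
    circumstances: list[str] = field(default_factory=list)      # conditions that apply
    dependencies: list[str] = field(default_factory=list)       # what the work depends on
    constraints: list[str] = field(default_factory=list)        # what cannot be changed
    success_criteria: list[str] = field(default_factory=list)   # how the result is judged

    def missing_elements(self) -> list[str]:
        """Return the names of elements that have not been filled in yet."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


# Only the facts stated in the retailer example are filled in.
assortment = CustomerSituation(
    actors=["retailer"],
    goal="determine the right assortment of products for each store",
    circumstances=["regional demand patterns"],
    constraints=["supplier constraints", "shelf space limits"],
)

gaps = assortment.missing_elements()
print(gaps)  # ['reason', 'trigger', 'dependencies', 'success_criteria']
```

A one-sentence description, even a good one, leaves half of the structure empty. A pain point or a feature request typically fills in none of it.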

Pain points and feature requests sit downstream of underlying problems. Customers complain about pain points because their existing tools don’t fit their situations. They describe feature requests because they are guessing at what might fit better. Both are signals that an underlying problem exists. Neither describes the situation in enough structural detail to reason about a good solution.

For more on the structural elements that make a problem description usable, see How to Define Customer Problems That Tell You What to Build.

Why product discovery iterates the three together

Several authors do separate problem investigation from solution definition in name. Cagan distinguishes “problem space” from “solution space”. Maurya separates problem interviews from solution interviews. Torres puts opportunities above solutions in her Opportunity Solution Tree.

But in practice, the methods they recommend collapse the three layers back together. Once a “problem” is conceived as a pain point or a desired solution, the only way to investigate it further is through a solution. There is nowhere else to look. So the unit of learning becomes a solution artefact: a prototype, an MVP, a demo, an experiment.

Cagan is explicit about this. In a 2020 article, he argues that “in so many of the best products and innovations, the breakthroughs happen precisely when we break down that wall between problem and solution space” and concludes that “in strong product companies, the vast majority of our product discovery work is spent, and needs to be spent, on the solution”.6

Given how Cagan thinks about problems, this is indeed correct. You cannot reason from “we can never find the right information” to an optimal solution. You have to iterate on solutions, get feedback, and let your understanding deepen along the way. But the argument changes if a “customer problem” means something other than a pain point.

Look closely at what a prototype, an MVP, or a demo actually contains:

It assumes certain customer problems exist.

It embodies one particular solution to those assumed problems.

It implements that solution using whatever technology the team had at hand.

When customers react poorly, the team can’t tell which layer is at fault. Did they misunderstand the customer problem? Did they understand it but design a poor solution? Was the solution sound but the implementation too limited? The test doesn’t separate the three. So the team guesses, adjusts, builds again, tests again. This is the iteration cycle that modern product development holds up as best practice.

Consider what this would look like in construction.

A builder has a vague idea that a neighbourhood might need some kind of commercial space: maybe offices, maybe a clinic, maybe a restaurant, they don’t yet know. Instead of investigating what the neighbourhood actually needs (if anything), the builder constructs a building and invites potential tenants, like accounting firms, healthcare professionals and restaurant owners, to walk through it. “Does this work for you? No?” Maybe tear down a wall, add plumbing, and try again.

We recognise this as insane. A builder who starts construction without understanding what the building needs to accomplish would go bankrupt.

Yet something remarkably similar is standard practice in software development.

Yes, software prototypes are cheaper than physical structures. But for complex B2B software products, iterating the overall product direction and its structure is not cheap at all. Building even a basic version takes weeks or months. Getting customers to deploy it, use it in real conditions, and provide meaningful feedback takes even longer. A single wrong-direction cycle can burn a year and hundreds of thousands of euros.

The cheap-to-change argument applies only to individual components, not to the product as a whole. Refining individual screens, workflows or features iteratively is indeed cheap, just like changing the interior design of a room is cheap compared to the cost of construction. But iterating on the overall direction of a complex product is an expensive substitute for understanding the problems first.

Developing scalable B2B SaaS products is closer to “building a Sagrada Familia”, as one experienced Chief Product Officer quipped to me. She explained: “It’s never finished. But you’d better know what the cathedral looks like before you lay the first stone. It becomes terribly difficult to develop a scalable product later if you have initially built just something haphazardly.”

Unless you have the whole picture in mind when you start building, you won’t get there by accumulating small decisions. Each compromise bakes itself into the foundation, the prototype hardens into the architecture, and the architecture determines what you can build for the next five to ten years.

Ideas-first innovation

How did product development in general end up here? The root cause, I believe, is that much of the industry operates under an “ideas-first” approach to innovation: someone has an idea for a product or feature, and everything else starts from that idea.

The industry has recognised that most ideas fail. Product discovery and Lean Startup respond by urging teams to validate the idea earlier, before committing full resources. Build a prototype, test it with customers, learn, iterate. This was a real improvement over building the full product blindly.

But it remains ideas-first. The question is still “is this idea any good?” rather than “what are the actual customer problems, and what solution follows from understanding them?”

The technology available to the team also shapes their thinking about what the solution could even be. Teams build what they know how to build, not what the customer problem demands. In the worst cases, the starting point is a technology the team wants to apply: “we want to create a product using blockchain”, or these days “we want to build an AI agent for X”. The customer problem becomes an afterthought.

The consequences get worse as the product grows more complex.

You can discover problems. You can’t discover solutions.

There is an asymmetry between problems and solutions that mainstream advice ignores.

Customer problems, in the structured sense above, exist as facts in the customer’s world, regardless of their current solutions. They can be literally discovered. You go to the customer’s world, observe, investigate, ask questions, and understand what you find. Customer problems are the explanation for why customers currently do what they do. The problems are out there, waiting to be found, like facts about geography, biology, or physics.

Solutions are a different category of thing. A solution doesn’t exist until it is invented. You cannot “discover” something that doesn’t exist. A product must be brought into existence through invention, ideally grounded in systematic reasoning from the facts you have discovered.

To emphasise the distinction, consider an analogy from science. Laws of nature are discovered. For example, electromagnetic phenomena existed in the world before anyone studied them. Their laws were discovered through the work of researchers such as Ampère, Coulomb, Faraday, and Gauss. Maxwell unified these laws into a single mathematical theory, which explained a wide range of phenomena. Systematic reasoning from his famous equations implied the existence of electromagnetic waves. These waves were later confirmed experimentally by Hertz, and they enabled Marconi and others to invent the radio, a practical technology product.

Discovery and invention are different kinds of intellectual acts.

I have written before about the importance of this distinction between discovering customer problems and inventing solutions. It is not wordplay. It shapes how product teams organise their work, what they ask in customer conversations, and what kind of output they aim for.

What customers actually tell you

When you ask a customer about their needs, you almost always get feature requests and pain points. Occasionally a customer describes the actual situation they are in. A hotel manager might say “we need to make sure rooms are ready when guests arrive, even when many rooms are vacated the same morning”. That’s a legitimate description of an underlying situation. But you can’t count on it, and even when it happens, the customer rarely provides the level of detail required to design the best solution.

This is not a failure on the customer’s part. They don’t know what kind of input is needed to design a good product, and there is no reason they should. Most product teams don’t know either, because the difference between pain points, desired solutions and underlying customer problems is not widely understood.

“We need to export data to Excel faster”

One illustrative example has stuck with me for years:

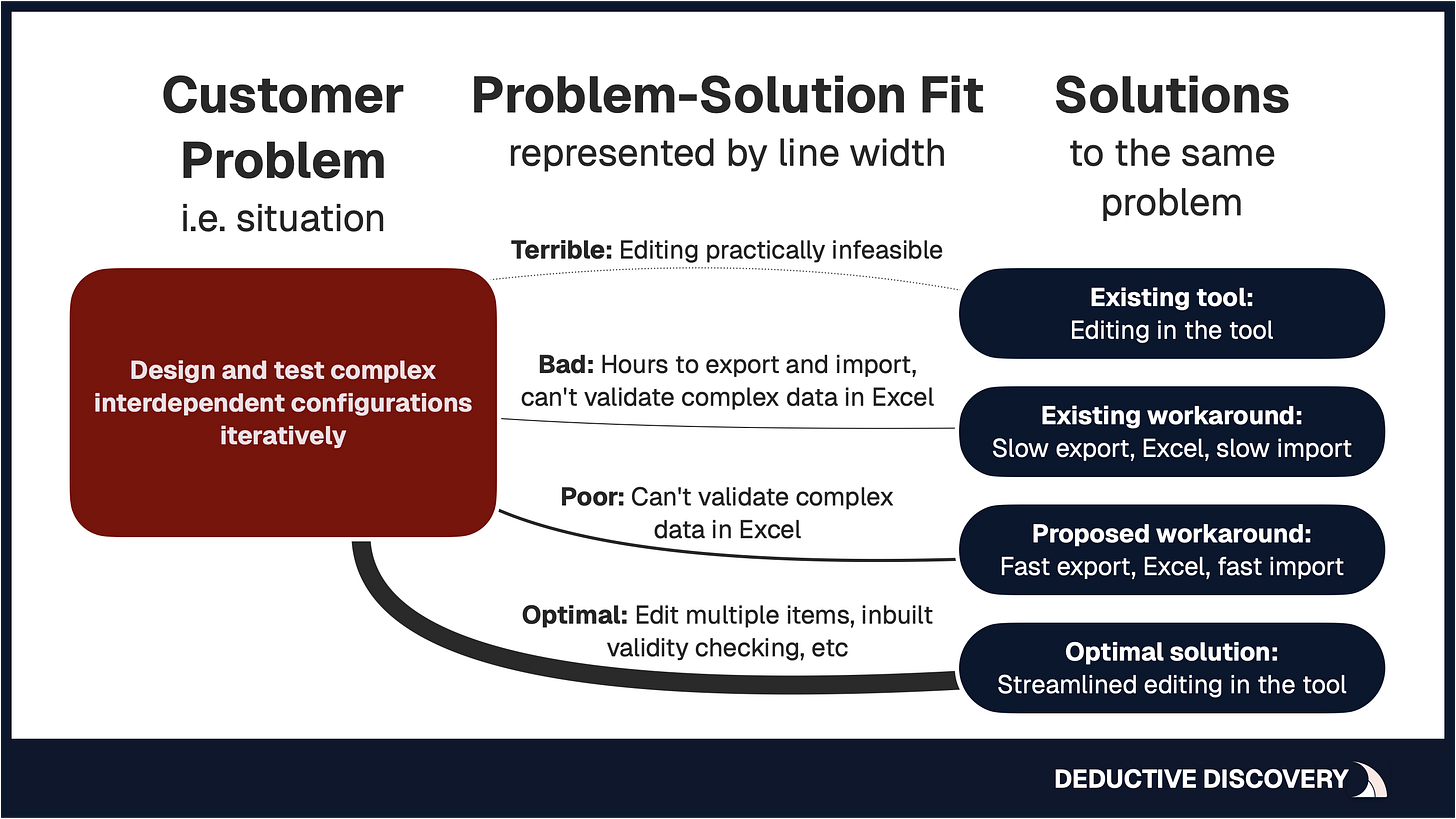

A frustrated telecom company contacted its software vendor. They used the vendor’s product to design and develop certain kinds of configurations, but importing and exporting the configuration data was taking hours. This seriously undermined their team’s productivity, so they demanded a faster import/export feature. It looked like a clear customer problem with an obvious solution. The table below summarises the situation.

When I investigated the real situation behind what the customer asked for, the right solution turned out very different.

The engineers using the tool needed to design and test complex interdependent configuration changes iteratively. The software didn’t support this kind of work properly, because editing a large number of configurations in the tool was practically infeasible. So the engineers had found a workaround: they exported all the data to Excel, made their changes there, and imported it back, hoping that the changes didn’t break the validity of the configurations.

The slow import/export wasn’t the actual customer problem. It was the most visible symptom of a poor fit between the workaround and the underlying situation. And the workaround was used because the tool didn’t address that situation properly. Building faster import/export meant building a better workaround, not solving the underlying problem.

The real solution was a user interface that lets engineers edit and test large, complex configurations directly: editing many items simultaneously in the same view, temporarily allowing inconsistent configurations, highlighting invalid dependencies, and so on. It was the optimal solution for all customers in the same situation.

Designing and building that solution would have taken too long, though, and the customer’s issue was too urgent to ignore. As often happens in enterprise software, engineering resources went into improving the workaround. Wasting development resources on temporary fixes and better workarounds is a common consequence of having created the wrong solution in the first place.

The four alternative solutions to the same underlying customer problem are summarised in the image below.

This pattern repeats across B2B software: what customers ask for and what would actually solve the underlying problem rarely resemble each other.

It’s not the customer’s job

“It’s not the customer’s job to know what they want.”

–Steve Jobs7

Steve Jobs’s line is usually read as a comment on solutions: customers can’t tell you what to build. True enough. There’s a parallel point about problems. It’s also not the customer’s job to know which facts about their world a product team needs to design and build a great product for them.

Think about it from their side. They don’t know that a customer problem expressed as a concrete situation enables systematic product design. They don’t know that events beyond their control, dependencies between different people’s tasks, hard physical constraints, examples of their data, or the criteria they use to judge results are specific facts a skilled product developer needs. Why would they know this? Product development is not their domain.

Compare this with medicine. When you visit a doctor, you describe your symptoms: headache, fatigue, fever. You don’t diagnose yourself. You don’t order blood tests. You don’t prescribe medication. The doctor knows which questions to ask, which symptoms to follow up on, and what tests to run. The patient provides raw information; the doctor interprets it and determines the diagnosis and treatment.

Software product development should work the same way. Customers provide access to their world: their activities, their workflows, their data, their goals, their frustrations. The product team’s job is to know what to look for, what questions to ask, and how to interpret what they find. Discovering customer problems requires the product team to understand what facts about the customer’s situations matter, and to go investigate those facts systematically.

The widespread advice to “listen to the voice of the customer” is well-intentioned but subtly misleading. Yes, talk to customers. Yes, observe them. Yes, learn from them. But don’t expect them to hand you a ready-made description of their problems. And definitely don’t expect them to hand you a good solution. Both are your job.

When teams skip this work, the result is trial and error labelled as discovery: collect feature requests and pain points, ideate in a workshop, build a prototype, react to lukewarm feedback, adjust, repeat. Customers can react to a prototype you put in front of them, but the reaction is based on their superficial impressions and expectations shaped by existing tools. They cannot reliably judge solutions to problems that neither party has fully understood or clearly articulated.

The cycle is cheap enough for small, simple products. For large and complex ones, the economics change dramatically.

Why this hits B2B software hardest

Much product discovery advice is generic, written as if it applies equally to a consumer mobile app, a kitchen gadget, and an enterprise planning system. B2B software is different in ways that make the conflation described above especially damaging.

The problem space is large and complex

B2B software typically addresses business processes involving multiple roles, interdependent activities, regulatory constraints, and deep domain knowledge. Systems like an assortment planning tool for retailers, a fulfilment system for telecoms, or a compliance platform for financial services involve dozens of interconnected subproblems spanning multiple teams and stakeholders. A few customer interviews won’t reveal the full picture.

Even for consumer products, talking to just a handful of people is risky. For B2B, where a single customer organisation might have fifteen different roles interacting with the same process, and the overall process may run for months, surface-level research is guaranteed to miss something critical.

The domain is hard to understand from the outside

Product developers building software for reinsurance, pharmaceutical development, or retail supply chain planning are almost never domain experts themselves. They can’t rely on intuition to fill gaps in their understanding. They must invest in learning the domain in detail, which is exactly what systematic problem discovery provides and what quick-and-dirty “discovery sprints” skip.

Buyers and users are different people

In B2B SaaS, the person who signs the purchase order is typically not the person who uses the software eight hours a day. A VP of Operations buys the tool; warehouse staff operate it. Their views on “the problem” may be completely different. Feature requests from the buying side often have little to do with what makes the product valuable in actual use. And conversations with users won’t surface the goals that drive the business as a whole, or strategic concerns that shape purchasing decisions.

Software is abstract and unconstrained

Unlike a physical product, where the constraints of materials and physics naturally narrow the design options and thus affect what problems can be solved, software can be “anything”. If you can describe a logical way for it to work, it can in principle be built.

This infinite solution space is both the great advantage and the great danger of software. Without a thorough understanding of the problem to narrow the options, a team can wander the solution space indefinitely, building plausible-looking prototypes that never converge on the right answer.

Iteration of the whole is infeasible

This is the biggest issue: iterating on a whole B2B solution is infeasible. Building even a basic version of a complex B2B SaaS product takes months. A complete enterprise product takes years. Each iteration cycle (design, build, deploy, customise, get customers to actually use it in real conditions, gather meaningful feedback, redesign) is prohibitively expensive.

You can iterate on individual parts of the product. But if the overall direction is wrong (i.e. you are solving the wrong problem or solving it at the wrong level), no amount of iterating the parts will fix it. It’s like discovering that a building’s foundation is in the wrong place and trying to fix the situation by rearranging the furniture.

“Will they buy it?” mixes up two different questions

There is a related conflation worth mentioning. Marty Cagan’s question for value risk (“Will customers buy it?”) mixes two very different concerns. Will the product create real value for customers by solving their problems well? And can you price, market, and sell it successfully? These are not the same thing.

I have written previously about how Product-Market Fit breaks down into two distinct components: Problem-Solution Fit (does the product solve the right customer problems well?) and Go-to-Market Fit (can you reach and sell to the right customers?). Common product discovery advice doesn’t make this distinction because the nature of customer problems isn’t generally understood.

Yet the same understanding of customer problems that lets you design a good solution also feeds pricing strategy, value propositions, and sales messaging. When problem discovery is thin, both the product and the commercial strategy suffer, and the product team can’t tell which one is failing.

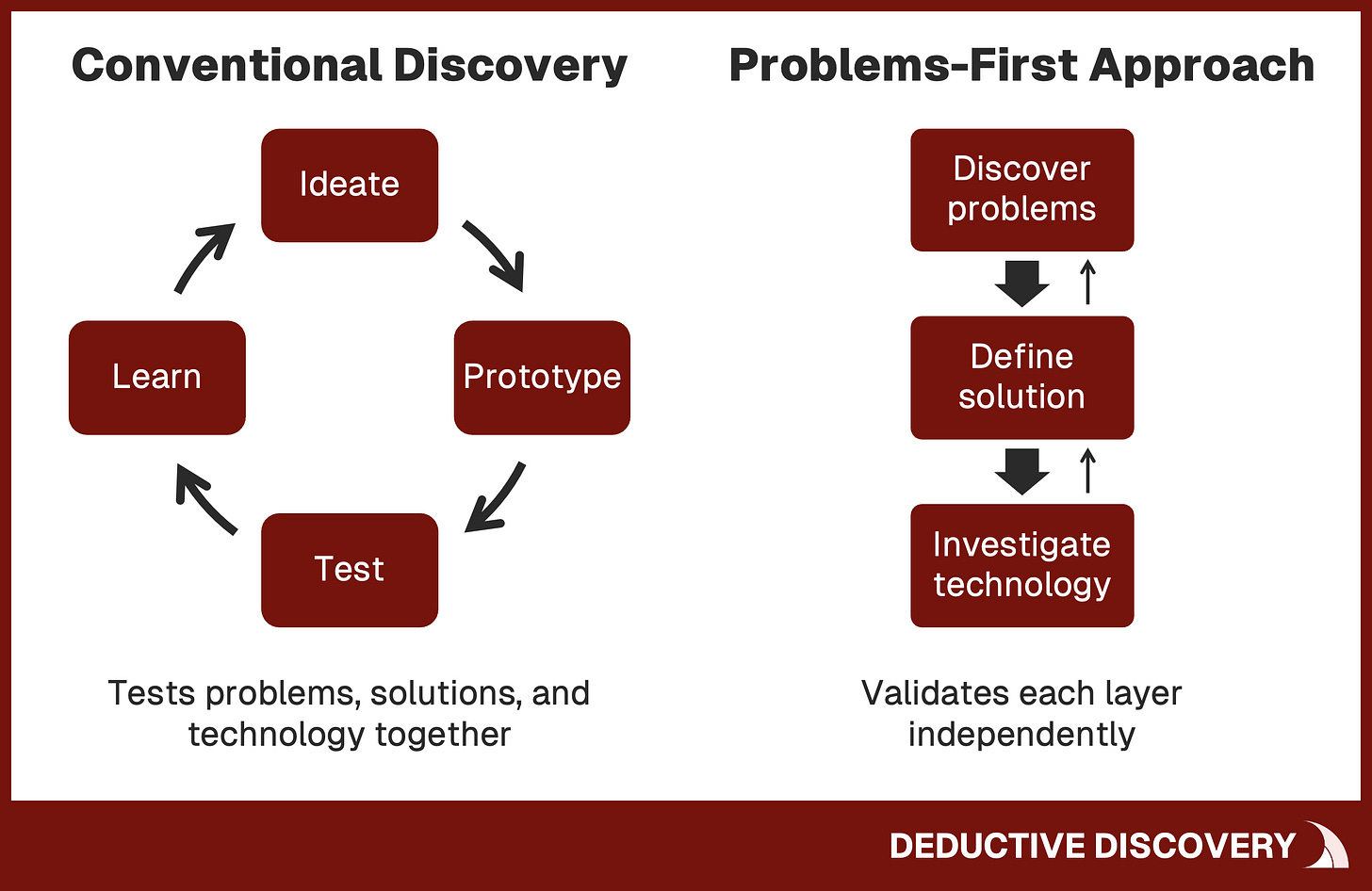

Separating what should be separate

If blending problem discovery, solution definition, and technology investigation into one iterative loop doesn’t serve complex B2B SaaS well, what does?

The alternative is conceptually straightforward: treat these three activities as distinct layers that build on each other, and do them in the right order.

1. Discover customer problems

First, discover customer problems. Go into the customer’s world and systematically investigate what situations they face, what they are trying to accomplish and why, what constraints and dependencies exist, what the relevant domain concepts are, and how they judge whether an outcome is good. Document this with concrete, specific examples: real roles, real context, real data.

Then validate your understanding directly with customers: “How accurately does this describe your situation?” Customers can reliably confirm or correct descriptions of their own reality, even when they can’t articulate the ideal solution.

Do this before thinking about what the product should be like. The right solution depends completely on the customer problems and their validity. If the problems turn out to be invalid, it pulls the rug out from under everything else: the solution, its implementation, and the product’s positioning and messaging.

I have described this kind of investigation in my earlier articles. Ten foundational elements of every customer problem (the target customer, their goal, the timing, the constraints, the evaluation criteria, and more) give you the starting point for what to discover. The 8 universal principles that govern customer problems help you recognise what you are looking at when you find it. I’ll be writing more about the practical methods of problem discovery in upcoming articles.

2. Invent the solution

Next, define the solution by reasoning from the validated customer problems. Once you understand and have concrete examples of what customers are trying to accomplish, in what circumstances, what unchangeable constraints exist, what dependencies are in play, and what criteria they use to judge results, you have the raw material to reason about what an optimal solution would look like.

This is analytical and inventive work that product teams must do. The solution follows from the customer problems as a mathematical result follows from the equations: through a systematic reasoning process.

This doesn’t mean there’s always only one viable solution. But understanding the problem in structural detail dramatically narrows the space of good solutions. The best among them can be identified through analytical comparison rather than guesswork, even before building anything. “Best” here means best at solving the validated customer problems, the dimension that I call Problem-Solution Fit. Whether that solution also wins commercially depends on Go-to-Market factors (pricing, positioning, messaging, sales, friction in adoption) that are a separate challenge.

3. Investigate technology

Investigate technology separately. With a clear picture of what the solution should do from the customer’s perspective, engineers can assess feasibility, research relevant technologies, prototype uncertain components, and plan the implementation. This is distinct work with its own methods and expertise, and it depends on knowing what the solution needs to accomplish.

A logical sequence, not a rigid one

The sequence is important because each activity validates the foundation for the next. Validate the problem descriptions with customers before designing solutions. Evaluate solution concepts against validated problems before committing to development. Assess technical feasibility once you know enough about what the solution must do. Each layer is confirmed before you build on top of it.

This sequence is logical but not rigidly sequential, for two reasons.

Each transition serves to test the completeness of the previous activity. When you start reasoning about the solution based on your validated problem understanding, you may find some gaps, like situations you didn’t explore, dependencies you don’t really understand, or evaluation criteria you didn’t catch. That’s a signal to investigate customer problems further, not to start iterating on prototypes.

Solution definition and technology investigation may overlap. As you reason through what the product must do to solve the customer problems, specific requirements emerge one at a time. When a particular requirement raises a technical question, engineers can start investigating it in parallel. You still need to first know what the solution must accomplish before you can assess how to accomplish it. But because individual requirements become clear progressively, feasibility work doesn’t have to wait for the whole solution to be defined.

Both kinds of backtracking are completeness checks, driven by the product team’s own analysis of where the foundation needs strengthening before building on top of it. They differ from iterative trial and error, where the team can’t tell which layer is at fault when a prototype falls flat. The sequence I’m describing eliminates wasteful trial-and-error iteration. It doesn’t require getting everything perfect on the first pass.

Problems-first innovation

Earlier I described how the industry operates under an “ideas-first” approach to innovation: start with an idea for a product or feature, then validate it through iteration.

The sequence I’ve described here is its opposite: a “problems-first” approach. Start by discovering the customer problems as facts. Then deduce what the optimal solution must be. Solution ideas are the output of the process, not the input.

The methodology that I have developed to implement this problems-first approach is called Deductive Innovation. It’s “deductive” because solutions are deduced from validated customer problems, rather than guessed at via iteration. By deduction I mean systematic reasoning from validated facts: when you know what customers are trying to accomplish, why, in what circumstances, under what unchangeable constraints, and by what criteria they judge results, the range of good solutions narrows and analytical reasoning about alternatives becomes possible.

I’ve been developing this approach over more than 20 years of working with B2B software, and I’ve seen it produce results that iterative methods consistently fail to achieve: a small team builds the right product that solves the right problem from the start, and customers recognise the solution as superior from the moment they see it.

I’ve described the methodology and its results in previous articles, including a series on market prediction that shows how a thorough understanding of customer problems lets you see where B2B markets are heading years in advance.

“Isn’t this just waterfall?”

This is the first objection everyone raises, and it deserves a direct answer.

No. The resemblance to waterfall is superficial.

Waterfall failed because it asked customers to specify what the system should do. That is asking them to define the solution upfront. Customers can’t do this reliably, and it’s not their job. So the specifications turned out wrong, and after a year or two of development, teams discovered they had built the wrong thing. There was no intermediate validation, no reliable way to catch mistakes early.

The approach I’m describing asks a fundamentally different question: “What situations do customers face and what are they trying to accomplish?” Customers can answer questions about their own reality. You are not asking them to design software.

The facts you discover can be checked with multiple customers before any solution design begins. Solution concepts can then be tested, first against those facts and then with customers, before engineering begins. Validation happens at every step, not just at the end.

Unlike waterfall’s rigid one-way progression, this approach expects you to return to earlier phases whenever gaps surface. If you start inventing the solution and realise your problem understanding has holes, you go back. What drives this backtracking is the product team’s own analytical process, not customer rejection of a finished system.

The goal isn’t to eliminate iteration altogether. Once your solution is broadly on the right track, once you are on the right mountain, so to speak, iteration is valuable for refinement and polishing.

What Deductive Innovation eliminates is the unnecessary iteration that comes from building on top of unvalidated assumptions. For complex enterprise software, where a single misguided iteration cycle can burn months and hundreds of thousands of euros, that difference is enormous.

So what?

Product discovery remains one of the most important concepts in modern product development. Its goals are exactly right: understand before you build, validate before you commit, and use evidence instead of opinion.

But the practice of product discovery mixes three activities that are better kept separate. This conflation does real damage, especially for complex B2B software where problems are large, understanding domains requires expertise, and iterating the whole product (often a product suite) is not feasible.

The path forward is to get clear about what you are actually doing at each stage.

When you are investigating customer problems, do that fully and well, without jumping to solutions. When you are inventing the solution, reason from what you have learned about the problems; don’t try to extract the answer from customers. When you are assessing technology, do it against a defined solution concept.

Discover problems. Invent solutions. Investigate technology. In that order.

If you’ve been building B2B software and finding that iteration cycles are slow and expensive, that customer feedback isn’t converging toward clarity, that your product keeps growing in complexity without growing proportionally in value, then the root cause may not be poor execution. The activities you are bundling together under “discovery” may need to be pulled apart and done properly, each in its own right.

I will continue exploring these ideas, methods, principles, and real-world results in upcoming articles. If you want to build B2B software products that customers recognise as valuable, and if you are tired of trial and error labelled as best practice, subscribe to this newsletter.

For more on discovering customer problems systematically, start with The 8 Universal Principles of Customer Problems in B2B SaaS and How to Define Customer Problems That Lead to Innovative B2B SaaS Products. For how deep problem understanding enables predicting where B2B markets are heading, see the market prediction series.

Paul Adams, Intercom: “Great PMs don’t spend their time on solutions”, May 2017, www.intercom.com/blog/great-product-managers-dont-spend-time-on-solutions/

Marty Cagan: “The End of Requirements”, SVPG, October 2013, www.svpg.com/the-end-of-requirements/

Teresa Torres: “Opportunity Solution Trees: Visualize Your Discovery to Stay Aligned and Drive Outcomes”, Product Talk, December 2023, www.producttalk.org/opportunity-solution-trees/

Ash Maurya: “The Problem With Problems”, Medium, June 2018, medium.com/lean-stack/the-problem-with-problems-50dd364ccb2b

Ash Maurya: “Find Better Problems Worth Solving with the Customer Forces Canvas”, Medium, August 2017, medium.com/lean-stack/the-updated-problem-interview-script-and-a-new-canvas-1e43ff267a5d

Marty Cagan: “Discovery – Problem vs. Solution”, SVPG, September 2020, svpg.com/discovery-problem-vs-solution/

This is a common rephrasing of what Steve Jobs actually said:

Some people say, “Give the customers what they want.” But that’s not my approach. Our job is to figure out what they’re going to want before they do. […] People don’t know what they want until you show it to them.

In Walter Isaacson: “Steve Jobs”, Little, Brown, 2011, chapter 42